INFO Sat 17:29:50 Unrar: Extracting from
INFO Sat 17:29:50 Unrar: No files to extract
INFO Sat 17:29:50 Unpack for 60.Days.In.264-CRiMSON-postbot requested par-check with forced repair
ERROR Sat 17:29:50 Could not delete temporary directory c:temp\tv\60.Days.In.264-CRiMSON-postbot\_unpack: No such file or directory

INFO Sat 17:29:50 Checking pars for 60.Days.In.264-CRiMSON-postbot
INFO Sat 17:29:50 Recovery files created by: Created by par2cmdline version 0.8.0.
INFO Sat 17:29:51 Performing full par-check for 60.Days.In.264-CRiMSON-postbot
INFO Sat 17:29:51 Recovery files created by: Created by par2cmdline version 0.8.0.
INFO Sat 17:30:04 Second unpack attempt skipped for 60.Days.In.264-CRiMSON-postbot due to par-check not repaired anything

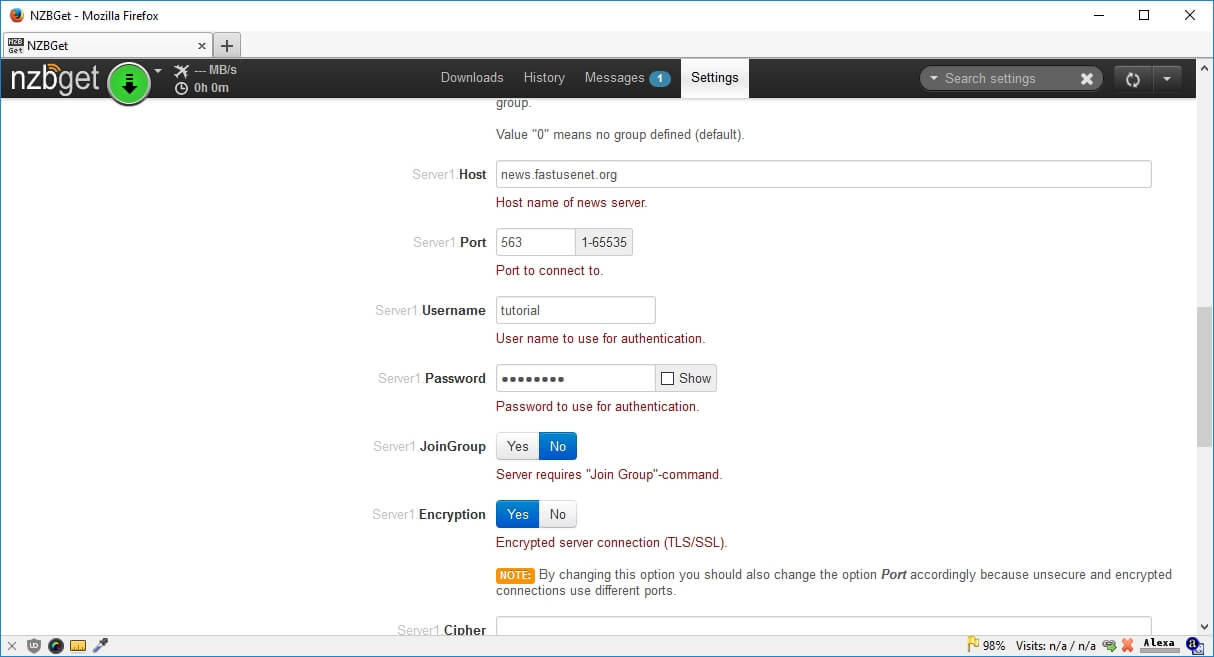

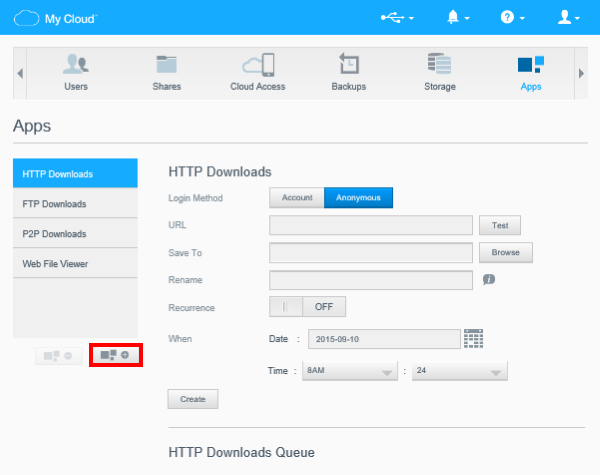

INFO Sat 17:30:04 Collection 60.Days.In.264-CRiMSON-postbot added to history
ERROR Sat 17:30:04 Unpack for 60.Days.In.264-CRiMSON-postbot failed.
INFO Sat 17:31:19 Deleting 60.Days.In.264-CRiMSON-postbot from history
INFO Sat 17:31:19 Deleting file G:\NVIDIA_SHIELD\shows\temp\nzb\60.Days.In.queued
INFO Sat 17:31:20 Collection 60.Days.In.264-CRiMSON-postbot removed from history

It appears I have a post-processing error? This is what happens with the files that are pushed from NZBgeek to NZBGet. I'm not sure why they are not being pushed to SABnzbd. When items are pushed, they only go to NZBGet. I have the download clients set up with NZBGet and SABnzbd, and I might add DOGnzb after I can figure this out. I have Sonarr set up with NZBgeek as the indexer. I still haven't quite wrapped my head around how it all works. I must have something set up incorrectly, but I feel I am oh so close. So I am using Sonarr, NZBGet, NZBgeek, DOGnzb, and Newshosting. This time I would like a bit more automation. I moved recently and am trying to get things set up again.
